The problem’s plain to see

Too much technology

Machines to save our lives

Machines dehumanize

– From the song Mr. Roboto by Styx (1983)

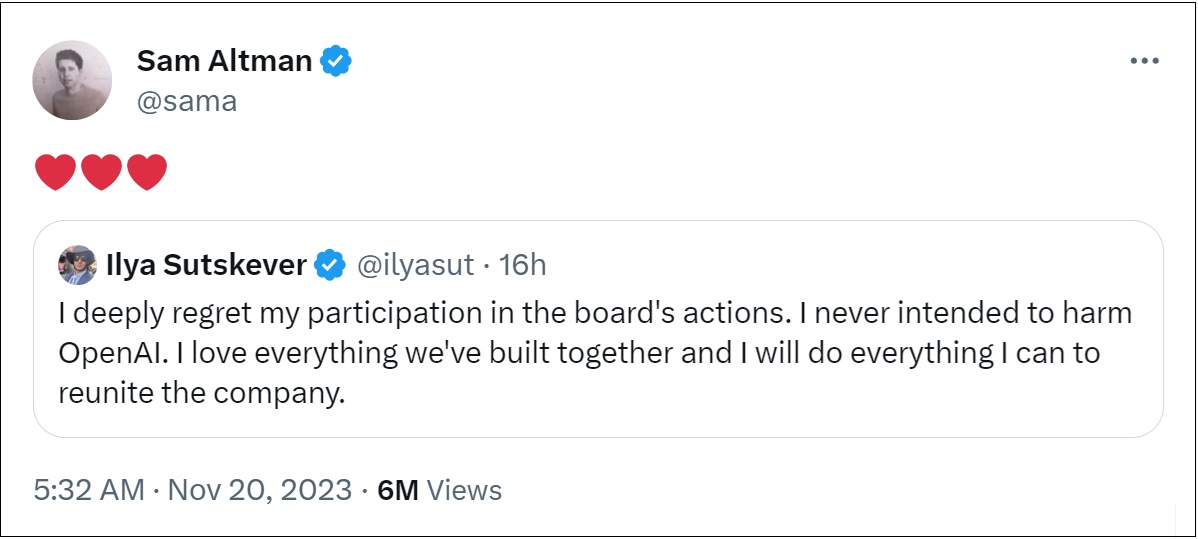

There continues to be a lot of drama around Friday's abrupt firing of Sam Altman, CEO of OpenAI, by the OpenAI board of directors.

(The board says Altman had lied to them and lost their trust, but as of Monday night that is all we know.)

Altman was a beloved and popular CEO and more than 90% of the 700-some employees at OpenAI are now threatening to quit.

Microsoft is part of all the drama: it holds a 49% stake in the company, has invested $13B to date, and supplies critical cloud infrastructure.

Late on Sunday it was announced that Microsoft would hire Altman and Greg Brockman (OpenAI’s president and a company co-founder who quit in solidarity with Altman).

By all accounts he has a moral compass, and must have had valid concerns about what Altman did.

But why did the board double down on their decision when approached by Altman and Satya Nadella (Microsoft CEO) to see if Altman could be reinstated as CEO?

Some Background

By all accounts OpenAI is (was, now?) the 800-lb gorilla in the race to build artificial intelligence models such as GPT-4.

Released in March, Generative Pre-trained Transformer 4 (GPT-4) is a multimodal large language model.

Unlike its predecessors, GPT-4 is a multimodal model: it can take images as well as text as input.

This gives it the ability to describe the humor in unusual images, summarize text from screenshots, and answer exam questions that contain diagrams.

[Source: Wikipedia]

Further down the road, there is the concept of AGI: Artificial General Intelligence.

An artificial general intelligence is a hypothetical type of intelligent agent.

If realized, an AGI could learn to accomplish any intellectual task that human beings or animals can perform.

Some argue that it may be possible in years or decades; others maintain it might take a century or longer; and a minority believe it may never be achieved.

[Source: Wikipedia]

Otto Barten and Joep Meindertsma wrote in July in Time Magazine of a ‘godlike, superintelligent AI computer or agent’:

A superintelligent AI could therefore likely execute any goal it is given.

Such a goal would be initially introduced by humans, but might come from a malicious actor, or not have been thought through carefully, or might get corrupted during training or deployment.

If the resulting goal conflicts with what is in the best interest of humanity, a superintelligence would aim to execute it regardless.

To do so, it could first hack large parts of the internet and then use any hardware connected to it.

Or it could use its intelligence to construct narratives that are extremely convincing to us.

Combined with hacked access to our social media timelines, it could create a fake reality on a massive scale.

As Yuval Harari recently put it: “If we are not careful, we might be trapped behind a curtain of illusions, which we could not tear away—or even realise is there.”

As a third option, after either legally making money or hacking our financial system, a superintelligence could simply pay us to perform any actions it needs from us.

And these are just some of the strategies a superintelligent AI could use in order to achieve its goals.

There are likely many more.

Like playing chess against grandmaster Magnus Carlsen, we cannot predict the moves he will play, but we can predict the outcome: we lose.